Navi vs. Turing: An Architecture Comparison

You've followed the rumors and ignored the hype; you waited for the reviews and looked at all the benchmarks. Finally, you lot slapped downward your dollars and walked away with one of the latest graphics cards from AMD or Nvidia. Inside these, lies a large graphics processor, packed with billions of transistors, all running at clock speeds unthinkable a decade ago.

You're really happy with your purchase and games never looked nor played better. Only you might just exist wondering what exactly is powering your make new Radeon RX 5700 and how dissimilar is it to the bit in a GeForce RTX.

Welcome to our architectural and characteristic comparison of the newest GPUs from AMD and Nvidia: Navi vs Turing.

Anatomy of a Modernistic GPU

Before nosotros begin our breakup of the overall chip structures and systems, let's take a look at the basic format that all modernistic GPUs follow. For the most office, these processors are merely floating signal (FP) calculators; in other words, they practice math operations on decimal/fractional values. Then at the very least, a GPU needs to accept i logic unit dedicated to these tasks and they're usually called FP ALUs (floating signal arithmetics logic units) or FPUs for brusque. Not all of the calculations that GPUs practise are on FP data values, so in that location will also exist an ALU for whole number (integer) math operations or it might even be the aforementioned unit, that but handles both information types.

Now, these logic units are going to need something to organize them, past decoding and issuing instructions to go along them busy, and this volition exist in the form of at least i dedicated group of logic units. Unlike the ALUs, they won't be programmable by the finish user; instead, the hardware vendor will ensure this process is managed entirely by the GPU and its drivers.

To store these instructions and the data that needs to exist processed, at that place needs to be some kind of memory structure, besides. At its simplest level, it will exist in two forms: cache and a spot of local memory. The former will be embedded into the GPU itself and will be SRAM. This kind of memory is fast but takes up a relative big amount of the processor's layout. The local memory will be DRAM, which is quite a scrap slower than SRAM and won't unremarkably be put into the GPU itself. Most of the graphics cards we run into today have local memory in the form of GDDR DRAM modules.

Finally, 3D graphics rendering involves boosted set tasks, such as forming triangles from vertices, rasterizing a 3D frame, sampling and blending textures, and so on. Like the instruction and control units, these are fixed function in nature. What they practise and how they operate is completely transparent to users programming and using the GPU.

Let's put this together and make a GPU:

The orange block is the unit of measurement that handles textures using what are chosen texture mapping units (TMUs) - TA is the texture addressing unit of measurement -- it creates the retention locations for the cache and local memory to use -- and TF is the texture fetch unit that collects texture values from memory and blends them together. These days, TMUs are pretty much the same beyond all vendors, in that they can address, sample and alloy multiple texture values per GPU clock bicycle.

The block beneath it writes the color values for the pixels in the frame, every bit well every bit sampling them back (PO) and blending them (Lead); this block also performs operations that are used when anti-aliasing is employed. The proper name for this cake is return output unit or render backend (ROP/RB for brusque). Like the TMU, they're quite standardized now, with each one comfortably handling several pixels per clock cycle.

Our basic GPU would be atrocious, though, even by standards from 13 years agone. Why?

There's just one FPU, TMU, and ROP. Graphics processors in 2006, such as Nvidia's GeForce 8800 GTX had 128, 32, and 24 of them, respectively. So permit'south start to practice something almost that....

Similar whatever good processor manufacturer, we've updated our GPU past adding in some more units. This means the chip will be able to procedure more instructions simultaneously. To aid with this, we've as well added in a bit more cache, but this time, right side by side to the logic units. The closer cache is to a calculator construction, the quicker it tin become started on the operations given to it.

The trouble with our new design is that in that location's however only one control unit of measurement handling our actress ALUs. It would be better if nosotros had more blocks of units, all managed by their own dissever controller, as this would mean nosotros could have vastly different operations taking place at the same time.

Now this is more like information technology! Separate ALU blocks, packed with their own TMUs and ROPs, and supported by dedicated slices of tasty, fast enshroud. In that location'south yet simply 1 of everything else, only the basic structure isn't a million miles abroad from the graphics processor we see in PCs and consoles today.

Navi and Turing: Godzilla GPUs

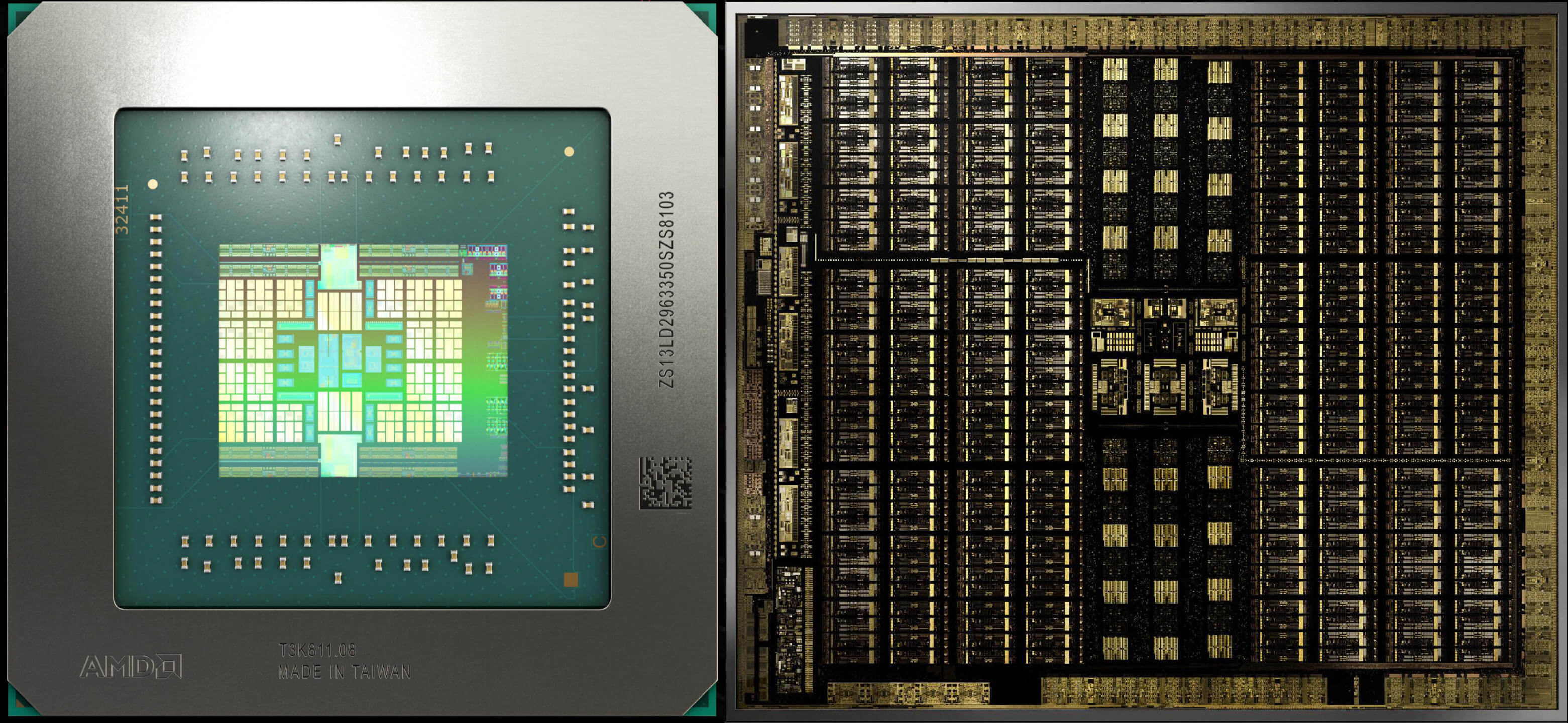

Now that we have described the bones layout of a graphics chip, let'south outset our Navi vs. Turing comparing with some images of the actual fries, albeit somewhat magnified and candy to highlight the various structures.

On the left is AMD's newest processor. The overall chip design is called Navi (some folks call it Navi x) and the graphics compages is called RDNA. Next to information technology, on the correct, is Nvidia's full size TU102 processor, sporting the latest Turing architecture. It'due south important to note that these images are not to calibration: the Navi die has an area of 251 mm2, whereas the TU102 is 752 mm2. The Nvidia processor is big, just it's not 8 times bigger than the AMD offering!

They're both packing a gargantuan number of transistors (10.3 vs 18.6 billion) simply the TU102 has an average of ~25 million transistors per square mm compared to Navi's 41 one thousand thousand per square mm.

This is considering while both chips are fabricated by TSMC, they're manufactured on unlike process nodes: Nvidia's Turing is on the mature 12 nm manufacturing line, whereas AMD's Navi gets manufactured on the newer 7 nm node.

Simply looking at images of the dies doesn't tell us much about the architectures, so allow'due south take a look at the GPU block diagrams produced by both companies.

The diagrams aren't meant to be a 100% realistic representation of the bodily layouts merely if you rotate them through xc degrees, the various blocks and key strip that are credible in both can be identified. To showtime with, we tin see that the two GPUs have an overall structure like ours (admitting with more of everything!).

Both designs follow a tiered approach to how everything is organised and grouped -- taking Navi to begin with, the GPU is built from two blocks that AMD calls Shader Engines (SEs), that are each split up into another 2 blocks called Asynchronous Compute Engines (ACEs). Each one of these comprises 5 blocks, titled Workgroup Processors (WGPs), which in plow consist of 2 Compute Units (CUs).

For the Turing blueprint, the names and numbers are different, simply the hierarchy is very similar: 6 Graphics Processing Clusters (GPCs), each with 6 Texture Processing Clusters (TPCs), with each of those congenital upwardly of 2 Streaming Multiprocessor (SM) blocks.

If you motion-picture show a graphics processor every bit being a large manufactory, where dissimilar sections manufacture dissimilar products, using the aforementioned raw materials, then this organization starts to make sense. The manufacturing plant's CEO sends out all of the operational details to the business organisation, where it then gets dissever into various tasks and workloads. By having multiple, contained sections to the factory, the efficiency of the workforce is improved. For GPUs, information technology's no different and the magic keyword here is scheduling.

Front and Center, Soldier -- Scheduling and Dispatch

When we took a await at how 3D game rendering works, we saw that a graphics processor is really nothing more than a super fast reckoner, performing a range of math operations on millions of pieces of information. Navi and Turing are classed as Single Education Multiple Data (SIMD) processors, although a better description would exist Single Educational activity Multiple Threads (SIMT).

A modern 3D game generates hundreds of the threads, sometimes thousands, every bit the number of vertices and pixels to exist candy is enormous. To ensure that they all become washed in only a few microseconds, it's important to have equally many logic units as decorated as possible, without the whole matter stalling because the necessary information isn't in the right place or there's not plenty resources infinite to piece of work in.

When we took a look at how 3D game rendering works, nosotros saw that a graphics processor is really null more than than a super fast calculator, performing a range of math operations on millions of pieces of information. Navi and Turing are classed every bit Single Instruction Multiple Data (SIMD) processors, although a better description would be Single Educational activity Multiple Threads (SIMT).

Navi and Turing work in a similar manner whereby a central unit takes in all the threads and then starts to schedule and consequence them. In the AMD fleck, this function is carried out by the Graphics Control Processor; in Nvidia's, it's the GigaThread Engine. Threads are organized in such a fashion that those with the same instructions are grouped together, specifically into a collection of 32 threads.

AMD calls this collection a moving ridge, whereas Nvidia call information technology a warp. For Navi, one Compute Unit of measurement tin handle 2 waves (or one 64 thread wave, but this takes twice every bit long), and in Turing, ane Streaming Multiprocessor works through 4 warps. In both designs, the wave/warps are independent, i.e. they don't need the others to finish before they can start.

Then far and so, there's not a whole lot different betwixt Navi and Turing -- they're both designed to handle a vast number of threads, for rendering and compute workloads. We need to look at what processes those threads to see where the two GPU giants separate in design.

A Deviation of Execution - RDNA vs CUDA

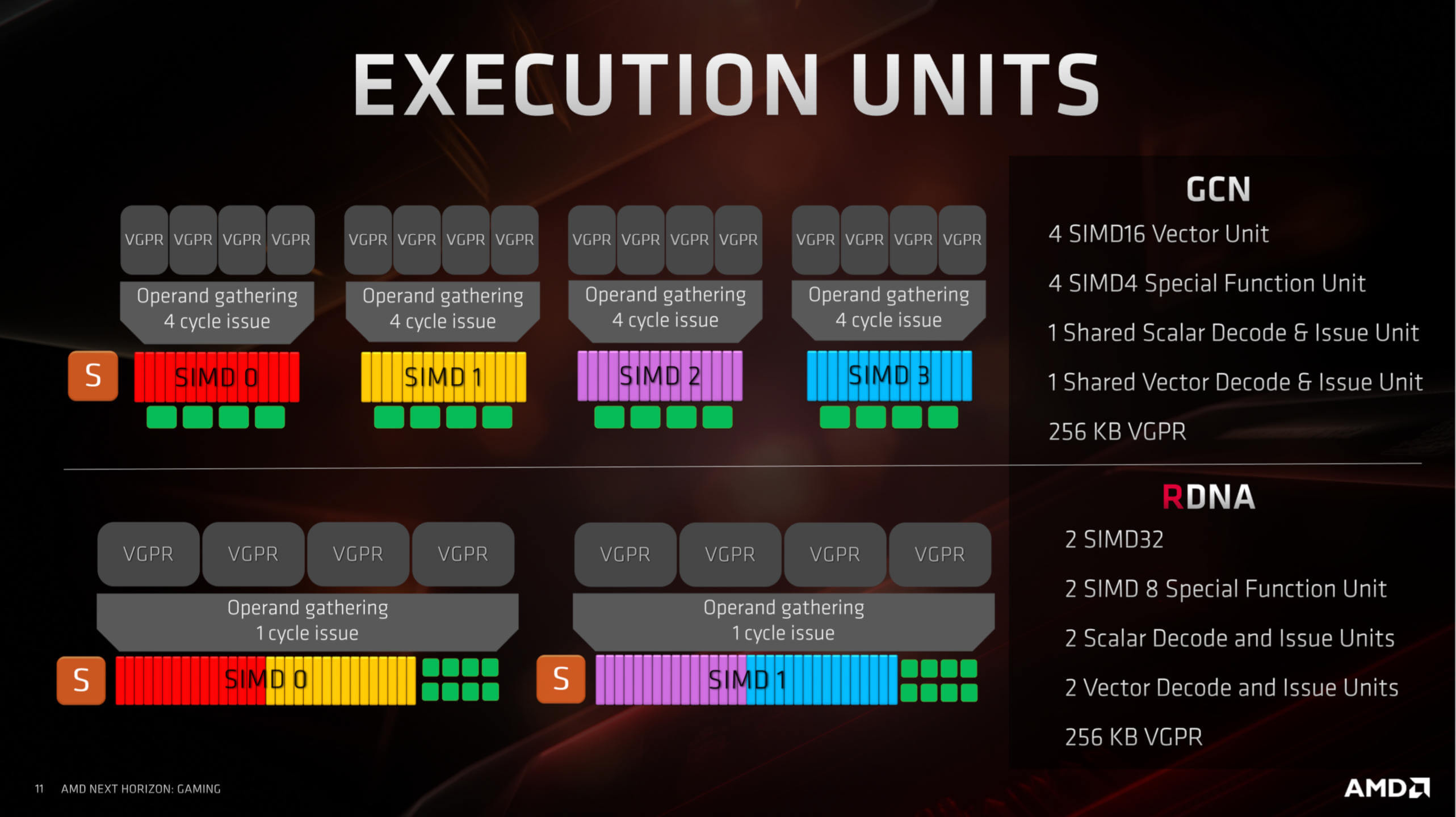

AMD and Nvidia take a markedly unlike approach to their unified shader units, fifty-fifty though a lot of the terminology used seems to exist the aforementioned. Nvidia's execution units (CUDA cores) are scalar in nature -- that ways i unit carries out one math operation on one data component; by dissimilarity, AMD's units (Stream Processors) work on vectors -- 1 operation on multiple data components. For scalar operations, they have a unmarried dedicated unit of measurement.

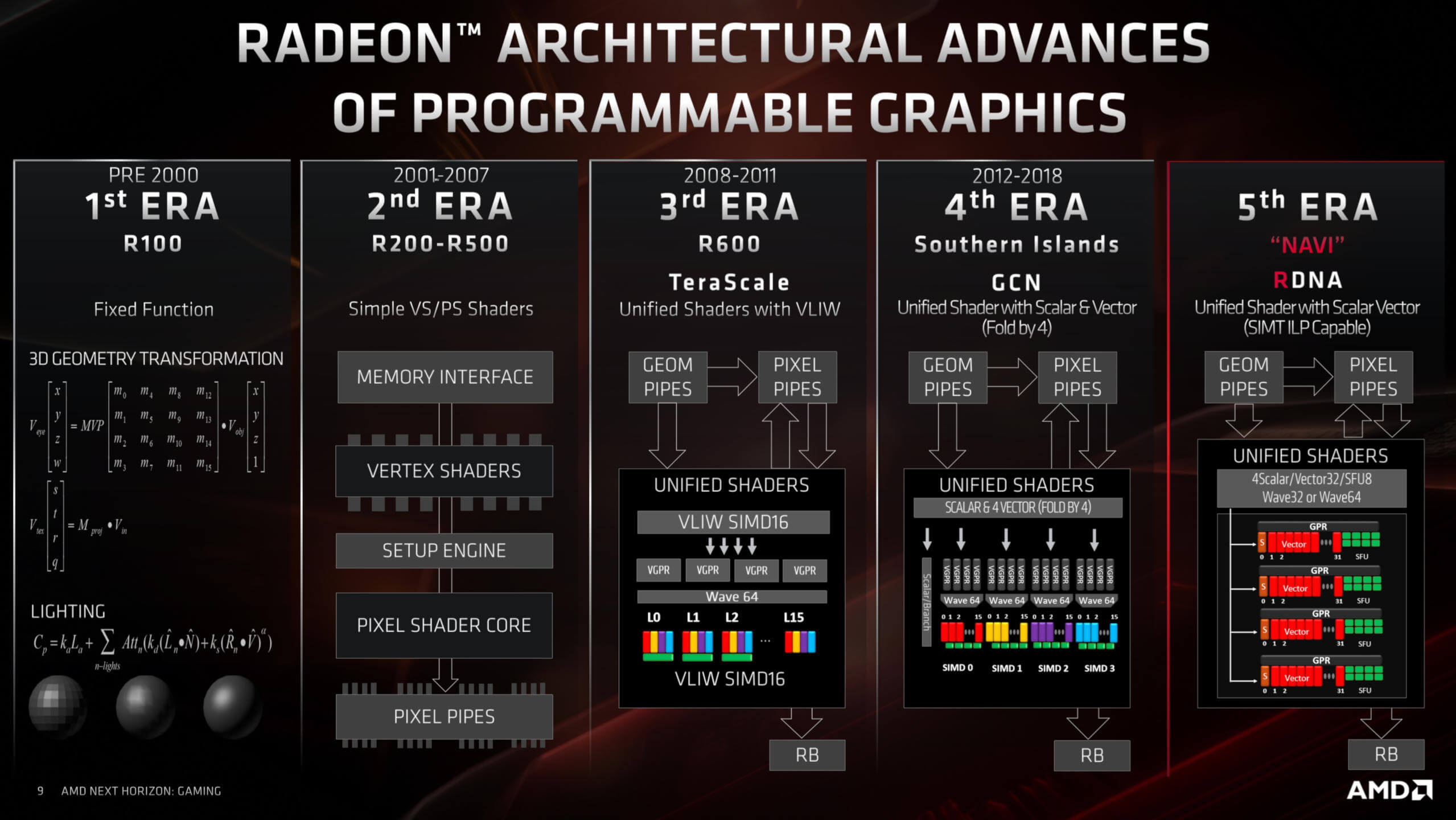

Before we take a closer look at the execution units, permit's examine AMD'south changes to theirs. For 7 years, Radeon graphics cards have followed an architecture called Graphics Cadre Next (GCN). Each new chip has revised various aspects of the pattern, merely they've all fundamentally been the same.

AMD has provided a (very) brief history of their GPU compages:

GCN was an evolution of TeraScale, a design that allowed for large waves to candy at the same time. The main result with TeraScale was that information technology merely wasn't very friendly towards programmers and needed very specific routines to get the best out of it. GCN fixed this and provided a far more accessible platform.

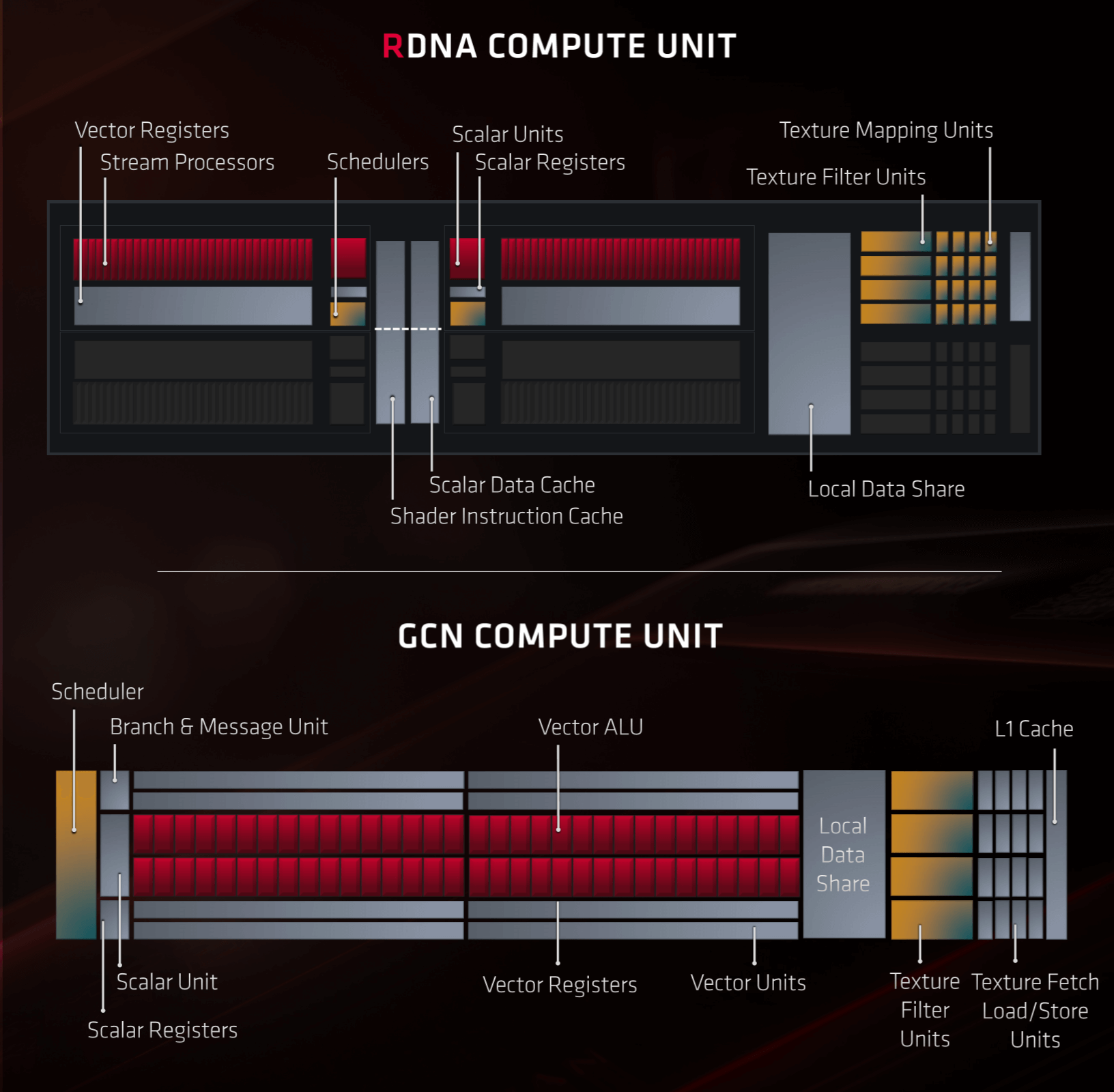

The CUs in Navi take been significantly revised from GCN equally role of AMD'southward comeback process. Each CU contains two sets of:

- 32 SPs (IEE754 FP32 and INT32 vector ALUs)

- 1 SFU

- 1 INT32 scalar ALU

- 1 scheduling and dispatch unit

Along with these, every CU contains four texture units. At that place are other units within, to handle the information read/writes from cache, but they're non shown in the image below:

Compared to GCN, the setup of an RDNA CU might seem to exist non very different, but it'southward how everything has been organized and arranged that'south of import here. To showtime with, each set of 32 SPs has its own dedicated educational activity unit, whereas GCN but had i schedule for four sets of 16 SPs.

This is an important change every bit it ways i 32 thread wave can be issued per clock cycle to each set of SPs. The RDNA architecture as well allows the vector units to handle waves of xvi threads at twice the rate, and waves of 64 threads at one-half the rate, so code written for all of the previous Radeon graphics cards is still supported.

For game developers, these changes are going to be very popular.

For scalar operations, there are at present twice as many units to handle these; the simply reduction in the number of components is in the form of the SFUs -- these are special office units, that perform very specific math operations, e.g. trigonometric (sine, tangent), reciprocal (ane divided by a number) and foursquare roots. There's less of them in RDNA compared to GCN but they tin now operate on information sets twice the size every bit before.

For game developers, these changes are going to be very popular. Older Radeon graphics cards had lots of potential performance, but borer into that was notoriously hard. Now, AMD has taken a large footstep forward in reducing the latency in processing instructions and too retained features to allow for backwards compatibility for all the programs designed for the GCN compages.

Simply what about for the professional graphics or compute market? Are these changes beneficial to them, too?

The short reply would be, yes (probably). While the electric current version of the Navi fleck as found in the likes of the Radeon RX 5700 XT, has fewer Stream Processors that the previous Vega design, we found it to outperform a previous-gen Radeon RX Vega 56 quite hands:

Some of this performance proceeds will come from the RX 5700 XT higher clock rate than the RX Vega 56 (and then it can write more pixels per second into the local memory) but it's down on peak integer and floating point operation past as much as 15%; and all the same, nosotros saw the Navi scrap outperform the Vega by as much equally xviii%.

Professional rendering programs and scientists running complex algorithms aren't exactly going to be blasting through a few rounds of Battlefield V in their jobs (well, possibly...) but if the scalar, vector, and matrix operations done in a game engine are being processed faster, then this should translate into the compute market. Correct at present, we don't know what AMD'due south plans are regarding the professional person marketplace -- they could well go along with the Vega architecture and keep refining the design, to assistance manufacturing, merely given the improvements in Navi, it makes sense for the company to move everything onto the new architecture.

Nvidia's GPU blueprint has undergone a similar path of evolution since 2006 when they launched the GeForce 8 series, albeit with fewer radical changes than AMD. This GPU sported the Tesla architecture, one of the first to use a unified shader arroyo to the execution compages. Beneath nosotros tin can run across the changes to the SM blocks from the successor to Tesla (Fermi), all the way through to Turing'south predecessor (Volta):

As mentioned earlier in this article, CUDA cores are scalar. They can carry out one float and one integer didactics per clock cycle on one information component (note, though, that the teaching itself might take multiple clock cycles to be processed), but the scheduling units organize them into groups in such a manner that, to a programmer, they can perform vector operations. The nearly pregnant change over the years, other than there only being more than units, involves how they are arranged and sectioned.

In the Kepler design, the full chip had 5 GPCs, with each 1 housing 3 SM blocks; by the time Pascal appeared, the GPCs were dissever into detached sections (TPCs) with two SMs per TPC. Just like with the Navi blueprint. this fragmentation is important, as it allows the overall GPU to be as fully utilized as possible; multiple groups of independent instructions tin can be processed in parallel, raising the shading and compute performance of the processor.

Let's take a look at the Turing equivalent to the RDNA Compute Unit:

One SM contains iv processing blocks, with each containing:

- 1 instruction scheduling and dispatch unit

- 16 IEE754 FP32 scalar ALUs

- 16 INT32 scalar ALUs

- 2 Tensor cores

- iv SFUs

- 4 Load/Store units (which handle cache read/writes)

At that place are also 2 FP64 units per SM, merely Nvidia doesn't show them in their cake diagrams anymore, and every SM houses four texture units (containing texturing addressing and texturing filtering systems) and 1 RT (Ray Tracing) core.

The FP32 and INT32 ALUs tin can work concurrently and in parallel. This is an important characteristic because even though 3D rendering engines require mostly floating point calculations, there is still a reasonable number of simple integer operations (east.1000. information address calculations) that need to be done.

The Tensor Cores are specialized ALUs that handle matrix operations. Matrices are 'square' information arrays and Tensor cores work on four x 4 matrices. They are designed to handle FP16, INT8 or INT4 data components in such a way that in ane clock cycle, up to 64 FMA (fused multiply-then-add) float operations take place. This type of calculation is commonly used in so-called neural networks and inferencing -- not exactly very mutual in 3D games, but heavily used by the likes of Facebook for their social media analyzing algorithms or in cars that have self-driving systems. Navi is also able to do matrix calculations but requires a large number of SPs to do then; in the Turing organization, matrix operations can be done while the CUDA cores are doing other math.

The RT Cadre is another special unit, unique to the Turing architecture, that performs very specific math algorithms that are used for Nvidia'due south ray tracing arrangement. A full analysis of this is beyond the scope of this article, but the RT Cadre is substantially 2 systems that work separately to the rest of the SM, so it can still work on vertex or pixel shaders, while the RT Core is decorated doing calculations for ray tracing.

On a key level, Navi and Turing have execution units that offer a reasonably similar feature fix (a necessity built-in out of needing to comply with the requirements of Direct3D, OpenGL, etc.) but...

On a fundamental level, Navi and Turing take execution units that offer a reasonably like feature prepare (a necessity born out of needing to comply with the requirements of Direct3D, OpenGL, etc.) simply they have a very different approach to how these features are processed. As to which design is ameliorate all comes down to how they become used: a plan that generates lots of threads performing FP32 vector calculations and little else would seem to favor Navi, whereas a program with a variety of integer, float, scalar and vector calculations would favor the flexibility of Turing, and and so on.

The Memory Hierarchy

Modern GPUs are streaming processors, that is to say, they are designed to perform a gear up of operations on every element in a stream of data. This makes them less flexible than a general purpose CPU and it also requires the retentiveness hierachy of the chip to be optimized for getting information and instructions to the ALUs equally quickly every bit possible and in as many streams as possible. This means that GPUs will have less enshroud than a CPU as the more than of the chip needs to be dedicated to cache admission, rather the amount of cache itself.

Both AMD and Nvidia resort to using multiple levels of cache within the fries, so let'due south have peek at what Navi packs first.

Starting at the everyman level in the hierarchy, the ii blocks of Stream Processors utilise a total of 256 kiB of vector general purpose registers (generally called a register file), which is the same amount as in Vega merely that was beyond four SP blocks; running out of registers while trying to process a large number of threads really hurts performance, so this is definitely a "good thing." AMD has greatly increased the scalar register file, besides. Where it was previously only 4 kiB, it's at present 32 kiB per scalar unit.

2 Compute Units then share a 32 kiB instruction L0 cache and a 16 kiB scalar data cache, but each CU gets its own 32 kiB vector L0 cache; connecting all of this retention to the ALUs is a 128 kiB Local Data Share.

In Navi, two Compute Engines form a Workgroup Processor, and v of those course an Asynchronous Compute Engine (ACE). Each ACE has access to its own 128 kiB of L1 cache and the whole GPU is further supported past 4 MiB of L2 enshroud, that'southward interconnected to the L1 caches and other sections of the processor.

This is almost certainly a course of AMD's proprietary Infinity Fabric interconnect architecture as the system is definitely employed to handle the 16 GDDR6 memory controllers. To maximize memory bandwidth, Navi too employs lossless color compression betwixt L1, L2, and the local GDDR6 retention.

Again, all of this is welcome, especially when compared to previous AMD chips which didn't take enough low level enshroud for the number of shader units they independent. In brief, more cache equals more internal bandwidth, fewer stalled instructions (considering they're having to fetch data from retentivity farther abroad), and so on. And that simply equals better performance.

Onto Turing's hierarchy, information technology has to be said that Nvidia is on the shy side when it comes to providing in-depth information in this area. Before in this article, we saw that each SM was split into 4 processing blocks -- each one of those has a 64 kiB register file, which is smaller than found in Navi, but don't forget that Turing's ALUs are scalar, not vector, units.

Next upwards is 96 kiB of shared retention, for each SM, which tin be employed as 64 kiB of L1 information cache and 32 kiB of texture cache or actress register space. In 'compute fashion', the shared memory tin be partitioned differently, such as 32 kiB shared retentiveness and 64 kiB L1 cache, but information technology's always done as a 64+32 split.

The lack of particular given about the Turning retentiveness arrangement left us wanting more than, so we turned to a GPU research team, working at Citadel Enterprise Americas. Of belatedly, they have released two papers, analyzing the finer aspects of the Volta and Turing architectures; the prototype above is their breakdown of the memory hierarchy in the TU104 scrap (the total TU102 sports 6144 kiB of L2 cache).

The squad confirmed that the L1 cache throughput is 64 bits per bicycle and noted that under testing, the efficiency of Turing's L1 cache is the best of all Nvidia's GPUs. This is on par with Navi, although AMD's chip has a college read rate to the Local Data Shop but a lower rate for the instruction/constant caches.

Both GPUs use GDDR6 for the local memory -- this is the most recent version of Graphics DDR SDRAM -- and both use 32-bit connections to the retentivity modules, and then a Radeon RX 5700 XT has 8 memory chips, giving a meridian bandwidth of 256 GiB/southward and 8 GiB of infinite. A GeForce RTX 2080 Ti with a TU102 scrap, runs with 11 such modules for 352 GiB/s of bandwidth and eleven GiB of storage.

AMD's documents can seem to be disruptive at times: in the first block diagram we saw of Navi, it shows four 64 bit retentiveness controllers, whereas a afterward image suggests there are 16 controllers. Given that the likes of Samsung only offer 32 bit GDDR6 memory modules, it would seem that the 2d image just indicates how many connections there are betwixt the Infinity Textile system and the retentiveness controllers. At that place probably are just four memory controllers and each one handles two modules.

So overall, there doesn't seem to be an enormous corporeality of difference betwixt Navi and Turing when it comes to their caches and local memory. Navi has a picayune more than Turing nearer the execution side of things, with larger education/constant and L1 caches, but they're both packed total of the stuff, they both use color compression wherever possible, and both have lots of dedicated GPU die space to maximize retention access and bandwidth.

Triangles, Textures and Pixels

Fifteen years ago, GPU manufacturers fabricated a big deal of how many triangles their fries could process, the number of texture elements that could exist filtered each cycle, and the capability of the render output units (ROPs). These aspects are still of import today just every bit 3D rendering technologies require far more than compute performance than e'er before, the focus is much more on the execution side of things.

However the texture units and ROPs are even so worth investigating, if only to note that at that place is no immediately discernible difference between Navi and Turing in these areas. In both architectures, the texture units can address and fetch 4 texture elements, bilinearly filter them into one element, and write it into cache all in 1 clock cycle (disregarding any boosted clock cycles taken for fetching the data from local retention).

The arrangement of the ROP/RBs is a little different between Navi and Turing, but not by much: the AMD chip has 4 RBs per ACE and each ane can output 4 blended pixels per clock cycle; in Turing, each GPC sports ii RBs, with each giving 8 pixels per clock. The ROP count of a GPU is really a measurement of this pixel output charge per unit, so a full Navi fleck gives 64 pixels per clock, and the full TU102 gives 96 (but don't forget that it's a much bigger chip).

On the triangle side of things, there's less immediate information. What we exercise know is that Navi still outputs a maximum of 4 primitives per clock cycle (ane per ACE) merely there'due south nothing still as to whether or not AMD accept resolved the issue pertaining to their Primitive Shaders. This was a much touted feature of Vega, allowing programmers to accept far more than command over primitives, such that it could potentially increase the primitive throughput by a gene of 4. However, the functionality was removed from drivers at some point not long after the production launch, and has remained fallow always since.

While we're still waiting for more information about Navi, it would be unwise to speculate further. Turing also processes 1 primitive per clock per GPC (and so upward to 6 for the full TU102 GPU) in the Raster Engines, just it also offers something chosen Mesh Shaders, that offers the same kind of functionality of AMD's Archaic Shaders; information technology's not a feature set of Direct3D, OpenGL or Vulkan, but tin be used via API extensions.

This would seem to exist giving Turing the edge over Navi, in terms of handling triangles and primitives, but at that place's non quite plenty data in the public domain at this moment in time to exist sure.

It'south Not All About the Execution Units

At that place are other aspects to Navi and Turing that are worth comparing. To first with, both GPUs have highly adult display and media engines. The onetime handles the output to the monitor, the latter encodes and decodes video streams.

Equally you'd expect from a new 2022 GPU design, Navi's display engine offers very loftier resolutions, at high refresh rates, and offers HDR back up. Display Stream Compression (DSC) is a fast lossy compression algorithm that allows for the likes of 4K+ resolutions at refresh rates more than 60 Hz to exist transmitted over i DisplayPort 1.4 connection; fortunately the epitome quality degradation is very small, almost to the betoken that you lot'd consider DSC nearly lossless.

Turing also supports DisplayPort with DSC connections, although the supported high resolution and refresh charge per unit combination is marginally better than in Navi: 4K HDR is at 144 Hz -- but the residual is the same.

Navi's media engine is just as modern as its display engine, offering back up for Avant-garde Video Coding (H.264) and High Efficiency Video Coding (H.265), again at high resolutions and high bitrates.

Turing's video engine is roughly the aforementioned every bit Navi'southward only the 8K30 HDR encoding support may tip the remainder in favor of Turing for some people.

There are other aspects to compare (Navi'due south PCI Express four.0 interface or Turing'due south NV Link, for instance) simply they're really just very small parts of the overall compages, no thing how much they get dressed upward and marketed. This is simply because, for the vast majority of potential users, these unique features aren't going to affair.

Comparing Like-for-Similar

This commodity is an ascertainment of architectural design, features and functionality, just having a direct performance comparison would exist a skillful style to circular up such an analysis. However, matching the Navi fleck in a Radeon RX 5700 XT against the Turing TU102 processor in a GeForce RTX 2080 Ti, for example, would be distinctly unfair, given that the latter has well-nigh twice the number of unified shader units as the former. However, there is a version of the Turing chip that can be used for a comparison and that'southward the one in the GeForce RTX 2070 Super.

| Radeon RX 5700 XT | GeForce RTX 2070 Super | |

| GPU | Architecture | Navi ten | RDNA | TU104 | Turing |

| Process | 7 nm TSMC | 12 nm TSMC |

| Die area (mm2) | 251 | 545 |

| Transistors (billions) | x.3 | 13.6 |

| Block profile | two SE | 4 ACE | 40 CU | 5 GPC | twenty TPC | 40 SM |

| Unified shader cores | 2560 SP | 2560 CUDA |

| TMUs | 160 | 160 |

| ROPs | 64 | 64 |

| Base clock | 1605 MHz | 1605 MHz |

| Game clock | 1755 MHz | N/A |

| Boost clock | 1905 MHz | 1770 MHz |

| Retentivity | 8GB 256-bit GDDR6 | 8GB 256-bit GDDR6 |

| Memory bandwidth | 448 GBps | 448 GBps |

| Thermal Design Power (TDP) | 225 W | 215 Due west |

It'south worth noting that the RTX 2070 Super is not a 'full' TU104 fleck (ane of the GPCs is disabled), so not all of those xiii.6 transistors are agile, which ways the fries are roughly the aforementioned in terms of transistor count. At face value, the two GPUs seem very similar, particularly if you just consider number of shader units, TMUs, ROPs, and the main memory systems.

In the Nvidia processor, one SM can handle 32 concurrent warps and with each warp consisting of 32 threads, a fully loaded GeForce RTX 2070 Super tin can work on 40,960 threads beyond the whole fleck; for Navi, one CU can accept up to sixteen waves per SIMD32 ALU, with each wave being 32 threads. And then the Radeon RX 5700 XT can also be packed with upwardly to 40,960 threads. This would seem to make them exactly even here, but given how differently the CU/SMs are arranged, and Nvidia's advantage with concurrent INT and FP processing, the end upshot volition depend heavily on the code beingness run.

This volition have an touch on how various games performance considering one 3D engine'southward lawmaking will favor ane structure better than the other, depending on what types of instructions are routinely sent to the GPU. This was evident when nosotros tested the two graphics cards:

All of the games used in the test were programmed for AMD'due south GCN architecture, whether directly for Radeon equipped PCs or through the GCN GPUs found in the likes of the PlayStation four or Xbox Ane. Information technology'southward possible that some of the more recently released ones could have prepped for RDNA's changes, but the differences seen in the benchmark results are more than likely due to the rendering engines and the way the instructions and data are beingness handled.

Then what does this all mean? Is one architecture really improve than the other? Turing certainly offers more capability than Navi thanks to its Tensor and RT Cores, but the latter certainly competes in terms of 3D rendering performance. The differences seen in a 12 game sample but aren't conclusive enough to make whatever definitive judgment.

And that is good news for united states.

Terminal Words

AMD's Navi plans were announced dorsum in 2022, and although they didn't say very much back so, they were aiming for a 2022 launch. When that date came and went, the roadmap changed to 2022, but it was clear that Navi would be manufactured on a 7nm procedure node and the design would focus on improving performance.

That has certainly been the case and as we've seen in this article, AMD made architectural changes to allow it to compete aslope equivalent offerings from Nvidia. The new design benefits more than than only PC users, as we know that Sony and Microsoft are going to utilise a variant of the chip in the forthcoming PlayStation 5 and next Xbox.

If you go back towards the offset of this article and look again at the structural design of the Shader Engines, as well as the overall dice size and transistor count, at that place is clearly telescopic for a 'big Navi' flake to go in a top-terminate graphics carte; AMD have pretty much confirmed that this is function of their current plans, as well as aiming for a refinement of the architecture and fabrication process within the next ii years.

But what virtually Nvidia, what are their plans for Turing and its successor? Surprisingly, very little has been confirmed by the company. Back in 2022, Nvidia updated their GPU roadmap to schedule the Pascal architecture for a 2022 launch (and met that target). In 2022, they announced the Tesla V100, using their Volta architecture, and it was this blueprint that spawned Turing in 2022.

Since then, things have been rather repose, and we've had to rely on rumors and news snippets, which are all generally saying the same matter: Nvidia's next architecture will be called Ampere, information technology will be fabricated by Samsung using their 7nm process node, and it'south planned for 2022. Other than that, there'south zippo else to go on. It's highly unlikely that the new chip will break tradition with the focus on scalar execution units, nor is it probable to drop aspects such as the Tensor Cores, as this would cause significant backwards compatibility issues.

Nosotros can brand some reasoned guesses about what the next Nvidia GPU will be like, though. The visitor has invested a notable amount of time and money into ray tracing, and the back up for it in games is just going to increase; so we can expect to see an improvement with the RT cores, either in terms of their capability or number per SM. If we presume that the rumor about using a 7 nm process node is true, then Nvidia will probably aim for a power reduction rather than outright clock speed increase, so that they tin increment the number of GPCs. Information technology's also possible that 7 nm is skipped, and Nvidia heads directly for 5 nm to gain an edge over AMD.

And information technology looks like AMD and Nvidia volition be facing new competition in the discrete graphics card market place from Intel, as we know they're planning to re-enter this sector, after a twenty yr hiatus. Whether this new production (currently named Xe) will able to compete at the aforementioned level every bit Navi and Turing remains to be seen.

Meanwhile Intel has stayed alive in the GPU market throughout those ii decades by making integrated graphics for their CPUs. Intel's latest GPU, the Gen 11, is more than similar AMD'south architecture than Nvidia'due south equally it uses vector ALUs that tin procedure FP32 and INT32 data, but we don't know if the new graphics cards will be a direct development of this blueprint.

What is certain is that the next few years are going to be very interesting, every bit long as the three giants of silicon structures continue to battle for our wallets. New GPU designs and architectures are going to push button transistor counts, cache sizes, and shader capabilities; Navi and RDNA are the newest of them all, and have shown that every footstep frontward, however small, can make a huge difference.

Shopping Shortcuts:

- GeForce RTX 2070 Super on Amazon

- GeForce RTX 2080 Super on Amazon

- GeForce RTX 2080 Ti on Amazon

- Radeon RX 5700 XT on Amazon

- Radeon RX 5700 on Amazon

- GeForce RTX 2060 Super on Amazon

- GeForce GTX 1660 Super on Amazon

This commodity was originally published in August vii, 2022. We've slightly revised information technology and bumped information technology every bit part of our #ThrowbackThursday initiative.

Source: https://www.techspot.com/article/1874-amd-navi-vs-nvidia-turing-architecture/

Posted by: hoardfirents90.blogspot.com

0 Response to "Navi vs. Turing: An Architecture Comparison"

Post a Comment